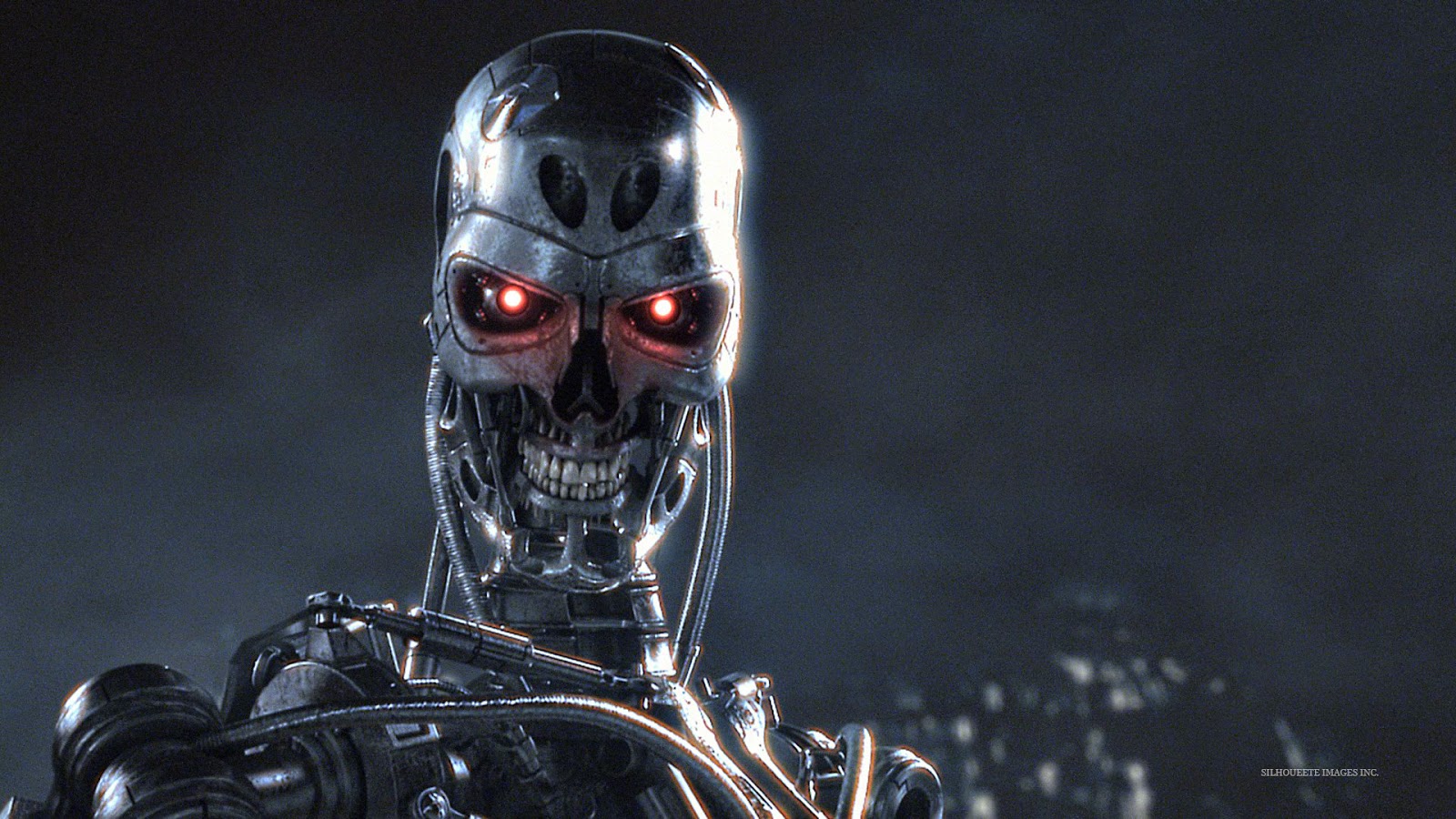

(IMG credit: moviepilot.com)

The (Possibly Kind of Maybe) Rise of the Machines

What with the recent announcement of a robot-staffed hotel opening in Japan, the internet has been awash with stories of Skynet and robot-human battles for supremacy. Elon Musk recently donated $10 million toward research designed to prevent a hostile AI from evolving -- and with geniuses of his clout joining institutions like the Singularity University, the public is bound to be nervous. So just what's at stake?

Even Hollywood has jumped on board the "singularity" wagon, with the recent Johnny Depp movie Transcendence and the upcoming Terminator reboots (featuring a wise-cracking Arnold Schwarzenegger, and yes, I am totally pumped!). Bestselling tomes on the issue have made millions for authors like the inventor Ray Kurzweil (a pioneer of speech recognition and music synthesizers, co-founder of SingularityU, etc.). Indeed, it was his book, The Singularity Is Near, that gave me my first in-depth introduction to the issues and debates at hand -- and outlined Kurzweil's GNR theory of evolution.

(IMG credit: IMDB.com)

In GNR (Genetics, Nanotechnology, Robotics), intelligent life evolves, and then takes control of its own evolution. Genetic manipulation comes first, with intelligent life (humans, in our case) becoming able to recreate their own physical bodies and eliminate disease. Then comes the nanotechnology revolution (Kurzweil highlights the work of Eric Drexler, considered by many to be the father of nanotechnology theory): any substance will be instantly creatable, even the human body, and the godlike power to manufacture anything, anywhere, in any quantity will be ours. Finally, both revolutions will feed into the Robotics revolution, in which advanced robotics are blended with learning, conscious Artificial Intelligence to create powerful, immortal bodies. At this point, the Singularity occurs -- according to Kurzweil, humanity will reach an undefinable point of evolution where matter, space, and even time itself are utterly manipulable.

Granted, further research revealed that many of these admittedly exciting ideas are already well known from popular culture. The Singularity/godhood event is familiar from Arthur C. Clarke and Stanley Kubrick's collaboration, 2001: A Space Odyssey (read the book first, it's better). Indeed, the most recent mindbender from the dark and brilliant mind of Christopher Nolan, Interstellar, features similar ideas (no spoilers here, go watch it for yourself! It's brilliant, stimulating, and annoying, like most of Nolan's work).

(Interstellar. IMG credit: theguardian.com)

Perhaps most intriguingly, Kurzweil-esque theories seem to match up fairly well with what we've pieced together of evolution (from Dawkins et al.) -- RNA finds a way to replicate, in theory, giving rise to life from non-living matter (as Kurzweil emphasizes, all matter is patterns, and intelligent or self-organizing patterns are more powerful). It is this theme of a growing ability to organize that really stands out about the whole GNR idea -- there's an air of rightness about it, a feeling that this was meant to be. The brilliant writers and speakers all seem to know what they're talking about, and the ramifications are so startling and exciting that you want to believe, and are half afraid not to.

But powerful objections remain. One of the strongest comes from Jaron Lanier, a programmer who argues that humans are grossly inept at programming, which means that programming a learning, self-aware AI is impossible, or will at the very least take a VERY long time. Others, like biologist PZ Myers, scathingly accuse Kurzweil and his allies of lazily glossing over the vast biological (esp. neurological) complexities involved in any "reverse-engineering" of the brain, and thus of consciousness. On this view, we simply don't know enough to even predict the nature of future evolution.

(IMG credit: mcb.berkely.edu)

Finally, the most compelling (and comforting) argument that I have encountered synthesizes both viewpoints (an approach I always appreciate). Yes, computers are advancing rapidly -- but only in one way of thinking, argues Walter Isaacson in The Innovators, his masterwork on the history of computing. Isaacson posits that computers and humans are good at different and complementary tasks, and while computers can grow infinitely in their niche, they will never be able to match the power of humans and computers working in tandem. He backs this up with hundreds of pages that trace the symbiotic nature of computers and humans, which was the original intention of many of the masterminds of the computer revolution (like J.C.R. Licklider and Vannevar Bush).

So in the end, while it's always fun to kick back and watch Terminator, and while it's prudent to create safeguards against technology gone amok, and while it is further pleasant to await potential enhancements to human existence -- don't hold your breath. Make the most of the gifts of the age, be aware of possible danger, but no matter what, LIVE HUMANLY AND HUMANELY. Even if the meaning of the first word evolves in the next century! :)
